What is Ollama?

Ollama is an open-source framework that simplifies running and managing large language models (LLMs) on your own hardware. It packages models like Llama 3 and Mistral into a lightweight, API-driven service that runs locally. This Ollama guide covers secure installation, model customization, API integration, and advanced configuration. Whether you're a developer, researcher, or AI enthusiast, learning Ollama is a practical step toward building private, customized AI solutions.

Security Alert: The Ollama API has no built-in authentication. A standard install binds it to 127.0.0.1 on port 11434, but setting OLLAMA_HOST=0.0.0.0 (or publishing the port from a container) exposes it to your entire network. This is a significant Ollama security risk, potentially leading to data leaks or unauthorized use of your compute resources. Before exposing Ollama to any untrusted network, ensure you have configured a firewall or set the OLLAMA_HOST environment variable (e.g., OLLAMA_HOST=127.0.0.1) to restrict access to localhost.
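As a quick sanity check on the advice above, the following minimal sketch reports whether an OLLAMA_HOST-style value points at a loopback address. The function name is hypothetical, and the sketch assumes the value has the form host, host:port, or scheme://host:port; it also assumes that an unset variable means the 127.0.0.1 default. It does not inspect firewall rules or container port mappings.

```python
import ipaddress
import os


def binds_to_loopback_only(ollama_host: str) -> bool:
    """Return True if the host part of an OLLAMA_HOST-style value is loopback.

    Hypothetical helper for illustration; it only parses the string.
    """
    value = ollama_host.strip()
    # Drop an optional scheme such as "http://".
    if "://" in value:
        value = value.split("://", 1)[1]
    # Drop a ":port" suffix for IPv4/hostname forms (exactly one colon).
    host = value.rsplit(":", 1)[0] if value.count(":") == 1 else value
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Unknown hostnames are treated as "not provably local".
        return False


if __name__ == "__main__":
    # Assumption: an unset OLLAMA_HOST means the 127.0.0.1 default.
    configured = os.environ.get("OLLAMA_HOST", "127.0.0.1")
    print(configured, "-> loopback only:", binds_to_loopback_only(configured))
```

A value such as 0.0.0.0:11434 makes the check return False, which is the signal to put a firewall in front of the port before going further.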

How to Install Ollama

Getting started with Ollama is straightforward across all major operating systems. Follow the steps below for your specific platform.

Windows Installation

Download Link: https://ollama.com/download/OllamaSetup.exe

To install Ollama on Windows, simply download the installer and double-click the executable file to begin the installation process.

macOS Installation

Download Link: https://ollama.com/download/Ollama-darwin.zip

For macOS users, download the zip file, unzip it, and run the application to complete the installation.

Linux Installation

You can install Ollama on Linux with a single command:

curl -fsSL https://ollama.com/install.sh | sh

Note: If you don't have curl installed, use your distribution's package manager (e.g., sudo apt-get install curl or sudo yum install curl) to install it first.

Once installed, start the Ollama service to make it active:

sudo systemctl start ollama
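To confirm that the service is actually answering, one option is to request the server's root URL, which returns a short status message when Ollama is up. The sketch below assumes the default address http://127.0.0.1:11434; the function name is hypothetical.

```python
import http.client
import urllib.error
import urllib.request


def ollama_is_running(base_url: str = "http://127.0.0.1:11434",
                      timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers 200 at base_url.

    Hypothetical helper: it only checks reachability, not which
    models are installed or whether a GPU is in use.
    """
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, or a malformed reply.
        return False

# Example: print(ollama_is_running())
```

If this returns False right after installation, checking the service with systemctl status ollama is a reasonable next step.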

Configuring GPU Acceleration

To get the best performance when you run LLMs locally, you should enable GPU acceleration.

NVIDIA GPU Support

With a native install, Ollama uses an NVIDIA GPU automatically once a supported NVIDIA driver is installed. The NVIDIA Container Toolkit is only required when you run Ollama inside Docker. In that case, follow the official installation guide for detailed instructions: https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/latest/install-guide.html#installation

AMD GPU Support

For AMD GPUs, acceleration relies on ROCm. If you run Ollama in Docker, use the container image with the rocm tag (ollama/ollama:rocm).

Running Your First Local LLM

Getting a model up and running is incredibly simple. The command below will automatically download and run Google's Gemma model, demonstrating how easy it is to run LLMs locally.

ollama run gemma

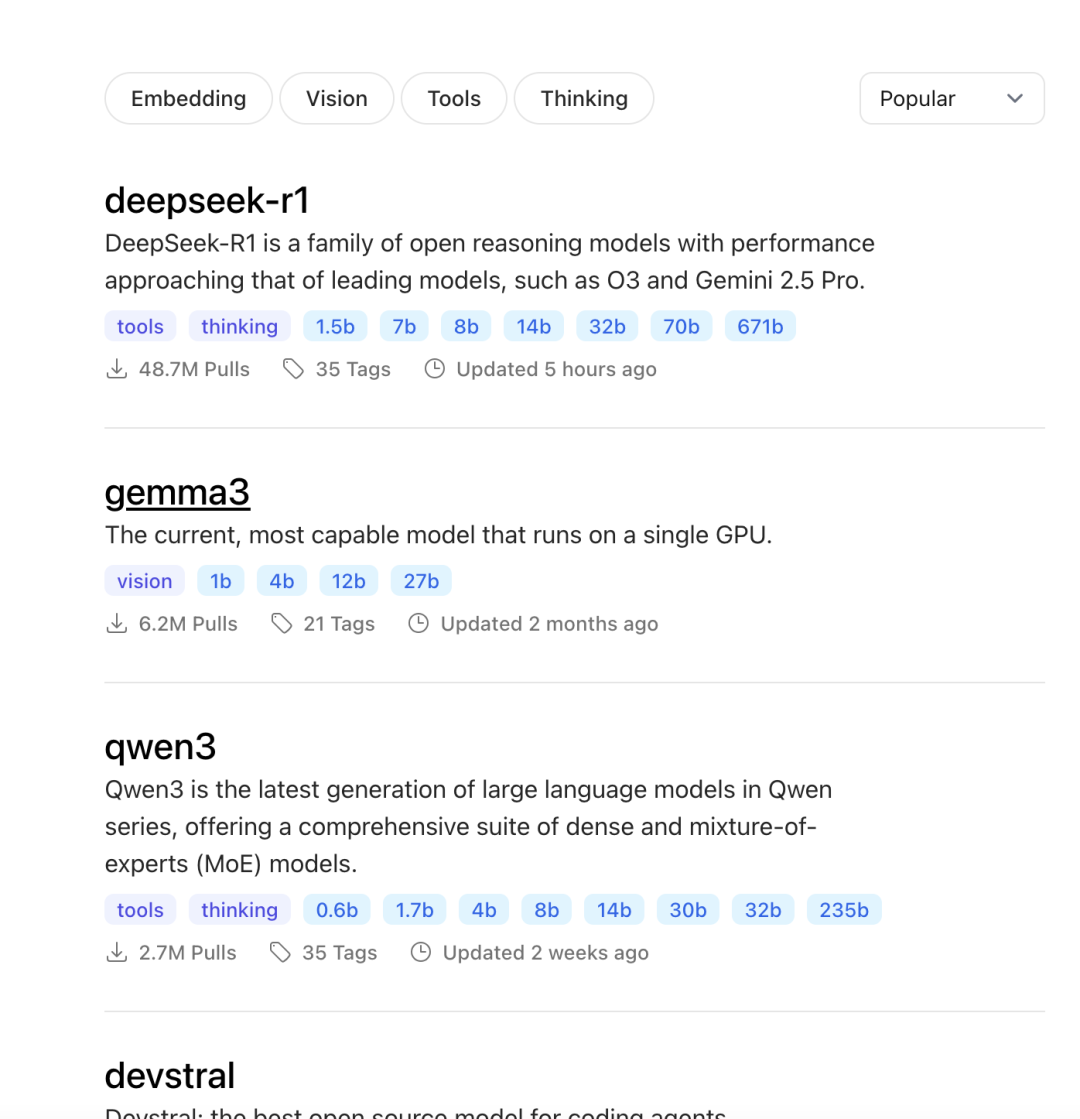

To see what other models are available, explore the full library on the official Ollama website: https://ollama.com/search

How to Customize Models with a Modelfile

Ollama truly shines in its ability to create custom models. You can import your own local models in formats like Safetensors and GGUF, apply model quantization to save resources, and create custom prompts using a Modelfile.

Importing LoRA Adapters

Low-Rank Adaptation (LoRA) is a popular technique for efficiently fine-tuning LLMs. You can easily import a LoRA adapter into Ollama.

- Create a Modelfile. This file defines your custom model. Use FROM for the base model and ADAPTER for your local LoRA adapter file.

FROM llama3

ADAPTER ./my-adapter.safetensors

Important: For stable results, ensure the base model specified in FROM is the exact same one used to train the adapter. It's also best to use a non-quantized adapter, as quantization methods can vary.

- Build the model. From the same directory, run ollama create:

ollama create my-model -f Modelfile

- Run your custom model:

ollama run my-model "What is a LoRA adapter?"

Ollama supports importing LoRA adapters for architectures like Llama, Mistral, and Gemma.

Importing Models from GGUF

GGUF is a file format designed for running LLMs on consumer hardware. Importing a GGUF model into Ollama is straightforward.

- Create a Modelfile and use the FROM instruction to point to your local .gguf file:

FROM ./my-local-model.gguf

- Build the model using the ollama create command:

ollama create my-model -f Modelfile

- Test your imported GGUF model:

ollama run my-model "What is a GGUF model?"

Ollama supports GGUF imports for a wide range of architectures, including Llama, Mistral, Gemma, Yi, DeepSeek, and StarCoder2.

Quantizing Models for Efficiency

Want to run larger models on your hardware? Model quantization reduces a model's memory footprint and can speed up inference. It's a trade-off, sacrificing a slight amount of accuracy for significant performance gains.

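- Create a Modelfile that references the full-precision (FP16 or FP32) model you want to quantize. The default llama3 tag is already 4-bit quantized, so point FROM at an unquantized source instead, for example the llama3:8b-instruct-fp16 tag or a local Safetensors directory:

FROM llama3:8b-instruct-fp16

- Use ollama create with the --quantize flag to build a new, smaller model. Here, we quantize to q4_0:

ollama create my-quantized-model -f Modelfile --quantize q4_0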
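To get a feel for the savings, the weight footprint can be approximated as parameters × bits per weight ÷ 8. The sketch below is a back-of-the-envelope estimate only: the 8-billion parameter count is a hypothetical round number, and the 4.5 bits per weight for q4_0 assumes the usual block layout of 32 four-bit weights plus one 16-bit scale. Real model files mix tensor types, and inference also needs memory for the context cache.

```python
GIB = 1024 ** 3  # bytes in one gibibyte


def estimate_weight_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    params = 8e9  # hypothetical 8-billion-parameter model
    for label, bits in (("fp16", 16.0), ("q4_0", 4.5)):
        print(f"{label}: ~{estimate_weight_gib(params, bits):.1f} GiB")
```

Under these assumptions the weights shrink from roughly 14.9 GiB to about 4.2 GiB, which is often the difference between fitting on a consumer GPU and not.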

Customizing System Prompts

You can bake a custom system prompt directly into your model to give it a specific personality or role. This is perfect for tailoring a model to your project's needs.

For example, to make llama3 act as a personal assistant, your Modelfile would look like this:

FROM llama3

SYSTEM "You are a helpful and friendly personal assistant."

For a complete list of Modelfile instructions, see the official documentation: https://github.com/ollama/ollama/blob/main/docs/modelfile.md
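If you generate Modelfiles from a script, a small helper can assemble the FROM, ADAPTER, and SYSTEM instructions covered in this section. This is a minimal sketch: the function name and parameters are hypothetical, and it writes the system prompt in the Modelfile's triple-quoted string form so that ordinary quotes inside the prompt need no escaping.

```python
from typing import Optional


def render_modelfile(base: str,
                     system: Optional[str] = None,
                     adapter: Optional[str] = None) -> str:
    """Build Modelfile text from a base model, optional adapter and prompt.

    Hypothetical helper for illustration; it covers only the three
    instructions discussed in this guide.
    """
    lines = [f"FROM {base}"]
    if adapter:
        lines.append(f"ADAPTER {adapter}")
    if system:
        if '"""' in system:
            # A triple quote would end the string early.
            raise ValueError('system prompt must not contain """')
        lines.append(f'SYSTEM """{system}"""')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    text = render_modelfile(
        "llama3",
        system="You are a helpful and friendly personal assistant.",
    )
    print(text, end="")
```

Writing the returned text to a file named Modelfile and running ollama create my-model -f Modelfile from the same directory builds the model as in the earlier steps.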

Advanced Usage and API

Using Multi-line Input

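For longer, multi-line prompts, wrap your text in triple quotes ("""). Ollama's interactive prompt (the >>> prompt opened by ollama run my-model) treats everything between the quotes as one message. In a POSIX shell such as bash, the same text can also be passed as a single quoted argument: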

ollama run my-model """

Can you explain what Ollama is in a few sentences?

"""

Sample Output:

Ollama is a lightweight, extensible framework for building and running language models on your local machine. It provides a simple API for creating, running, and managing models, as well as a library of pre-built models that can be easily used in a variety of applications.

Integrating with the Ollama API

Ollama exposes a REST API for programmatic control over your models, allowing you to integrate local LLMs into your applications. You can find the detailed Ollama API reference here: https://github.com/ollama/ollama/blob/main/docs/api.md
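As an illustration, the sketch below calls the /api/generate endpoint using only the Python standard library. It assumes the server is listening on the default http://127.0.0.1:11434 and that a model named my-model already exists; the helper names are hypothetical. Setting "stream" to false asks for a single JSON object instead of a stream of chunks.

```python
import json
import urllib.request


def build_generate_request(model: str, prompt: str,
                           base_url: str = "http://127.0.0.1:11434"
                           ) -> urllib.request.Request:
    """Prepare a non-streaming POST request for /api/generate."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str,
             base_url: str = "http://127.0.0.1:11434",
             timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text."""
    request = build_generate_request(model, prompt, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # The generated text is returned in the "response" field.
    return body["response"]

# Example (requires a running Ollama server and an existing model):
# print(generate("my-model", "What is a GGUF model?"))
```

Keeping request construction separate from the network call makes the payload easy to inspect or unit-test without a running server.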

Common Ollama Use Cases

- Local Development and Testing: Run and debug open-source LLMs like Llama 3 and Mistral, and test fine-tuned adapters, entirely offline once the models are downloaded. This avoids network latency and keeps your data on your machine.

- On-Premise Deployment: Deploy models on local servers or in a private cloud for industries with strict data security requirements (e.g., finance, healthcare).

- Custom AI Applications: Build specialized AI tools by combining custom models with tailored prompts and parameters.

- AI Research and Education: Use Ollama as a low-cost, accessible platform to explore the inner workings of large models and experiment with fine-tuning.

- Edge and Resource-Constrained Environments: Run LLMs on dedicated hardware, like a local workstation with a consumer-grade GPU.

Limitations and Practical Considerations

- Hardware Requirements: Running LLMs is resource-intensive, especially on VRAM. Choose a model that fits your machine's capabilities.

- Model Management: You are responsible for managing your Modelfile and model weights, which often rely on the open-source community.

- Performance Bottlenecks: Local performance will not match large-scale cloud services. Response speed is limited by your hardware.

- Security and Compliance: While local deployment enhances data security, you are responsible for content safety and adhering to model licenses.

- Operational Overhead: Self-hosting involves ongoing costs for model updates, system maintenance, and server operations.

Conclusion: The Future of Local AI with Ollama

Ollama represents a significant step forward in making large language models accessible. By simplifying local deployment, it empowers developers to innovate freely, securely, and cost-effectively. While it's essential to be mindful of hardware limitations and Ollama security best practices, the ability to run, customize, and control powerful AI models on your own machine is a game-changer for the future of personalized and private AI applications.